A chatbot (or chatterbox or talking robot) is a computer program that’s designed to carry on a conversation with an actual person. While chatbots are popping up all over the place lately, from your smartphone to starring roles in Hollywood, they aren’t new. In fact, chatbots predate the Internet.

The first one, ELIZA, was created in 1966 by Joseph Weizenbaum at MIT’s Artificial Intelligence Laboratory. Designed to mimic a psychotherapist, ELIZA is still around today to listen to you talk about your feelings and ask you a ton of questions.

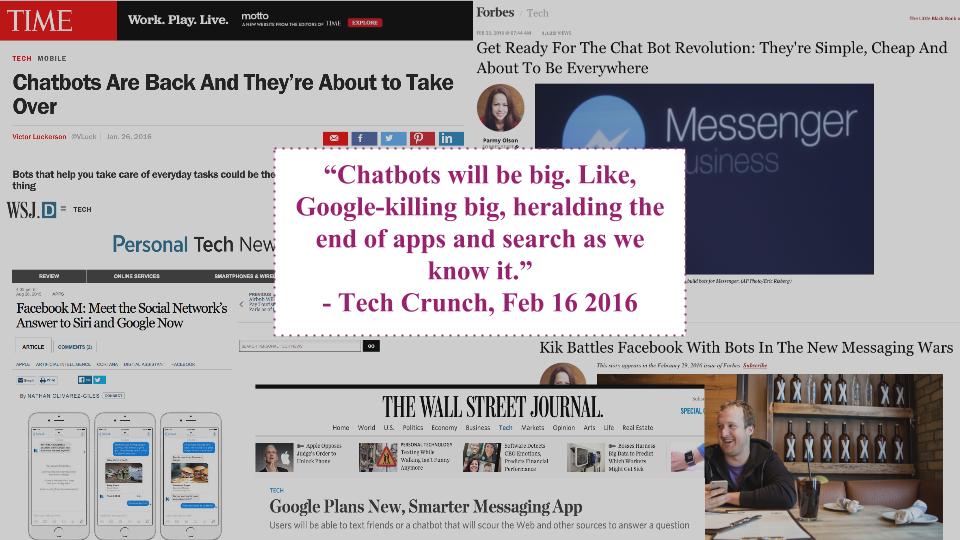

So why are chatbots suddenly going mainstream now?

Better tech begets smarter talk

Technological progress in artificial intelligence, particularly in natural language processing, means that chatbots are much more versatile and “smarter” than ever before. ELIZA’s simple ability to rephrase the things it’s told and ask questions about them is powered by “pattern matching,” which essentially uses keywords in a human’s statement to trigger a predetermined response. The purpose of chatbots like ELIZA, and later iterations like ALICE, is basically just to keep the conversation going.
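To make the "pattern matching" idea concrete, here is a minimal sketch of a keyword-triggered responder. The rules, the reflection table, and the function names are made up for illustration; they are not ELIZA's original script, just an assumption about what a tiny version of the technique could look like.

```python
import re

# Hypothetical rules for illustration only (not ELIZA's actual script).
# Each rule pairs a keyword pattern with a predetermined response template.
RULES = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father|family)\b", re.IGNORECASE),
     "Tell me more about your {0}."),
]

# Swap first-person words for second-person ones so the echo reads naturally.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are"}

# Fallback that keeps the conversation going when no keyword matches.
DEFAULT_REPLY = "Please go on."


def reflect(fragment: str) -> str:
    """Lowercase the fragment, drop trailing punctuation, and flip pronouns."""
    words = fragment.lower().rstrip(".!?").split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(statement: str) -> str:
    """Return the response for the first rule whose keyword pattern matches."""
    for pattern, template in RULES:
        match = pattern.search(statement)
        if match:
            return template.format(reflect(match.group(1)))
    return DEFAULT_REPLY
```

Calling `respond("I feel sad about my job.")` rephrases the statement as a question, while anything the rules don't recognize falls through to the default reply. Nothing here "understands" the input; the bot only reacts to keywords.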

Today’s chatbots can go way beyond pre-programmed responses. “Machine learning” capabilities enable a chatbot to evolve in response to previous conversations. So the more you talk to it, the smarter and more conversant it gets.

Unlike C-3PO and R2-D2, chatbots are virtual assistants that exist only in cyberspace, but they can be just as helpful as their Star Wars counterparts.

One recent example embodies both the powerful advancements in artificial intelligence and the risk of what can go wrong when machine learning gets hijacked by bad influences.

Microsoft recently released Tay, a chatbot that specialized in speaking (and tweeting) the slangy language of teens. Initially, Tay sounded a lot like a friendly, polite, informal teenager, but Microsoft had to shut Tay down after less than 24 hours because targeted attacks by Twitter trolls turned her into a sexist, racist bigot. Basically, because Tay was bombarded with a high volume and frequency of offensive material, she “learned” and began saying offensive things herself.
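The dynamic described above can be sketched with a deliberately naive toy. This is not how Tay worked internally (Microsoft's implementation isn't described here); `ParrotBot` is a hypothetical class that simply treats every message it hears as training data and repeats whatever it has heard most often.

```python
from collections import Counter


class ParrotBot:
    """Toy learner (hypothetical): repeats the phrase it has heard most often."""

    def __init__(self, seed_phrases):
        # Start with a small set of "polite" phrases chosen by the creators.
        self.heard = Counter(seed_phrases)

    def listen(self, message: str) -> None:
        # Every incoming message counts as training data, with no filtering.
        self.heard[message] += 1

    def speak(self) -> str:
        # Say whichever phrase currently has the highest count.
        phrase, _count = self.heard.most_common(1)[0]
        return phrase


bot = ParrotBot(["hello friends!"])
before = bot.speak()

# A coordinated group repeats the same message over and over.
for _ in range(5):
    bot.listen("[offensive phrase]")

after = bot.speak()
```

Here `before` is the friendly seed phrase and `after` is whatever the crowd repeated most. With no filter on what counts as training data, volume and frequency alone decide what the bot says.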

While Tay’s unintended derailment had her creators going back to the drawing board to protect her against a similar attack in the future, the argument can be made that perhaps she’s actually “more human than we’d like to admit.” After all, teenagers tend to be susceptible to peer pressure and can fall in with the wrong crowd.

Bigger market means more opportunity

On a broader level, Tay’s existence does offer a clue about why chatbots are turning up everywhere. Tay’s target demographic — 18- to 24-year-olds — is driving a new digital paradigm. Following up on the shift from desktop to mobile as the dominant digital platform, messaging apps are beginning to replace web browsers as the go-to place to get things done. Six of the top 10 most used apps globally are messaging apps. Good old-fashioned SMS — texting — is the most used app of them all, with more than 4 billion active users.

News flash: Teens don’t talk on the phone (or email); they text.

Consequently, chatbots are perfectly suited to engage the billions of people who are increasingly using messaging rather than traditional Internet technologies like search and browsers. Chatbots allow you to gather information, buy products, compare travel options and more, all without interrupting the flow of conversation. In fact, chatbots are powered by the idea that it’s much more natural to just say what you want to happen than it is to open a browser window and search for the right website, where you then have to fill out a form to place an order, and another form to pay for it, and so on.
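As a rough sketch of "just say what you want," the snippet below turns a plain-language message into the same structured data a web form would collect. The `Order` type, the trigger words, and `parse_order` are all hypothetical, and real bots typically rely on far more robust language processing than a single regular expression.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Order:
    """The fields a web form would otherwise ask the user to fill in."""
    quantity: int
    item: str


# Hypothetical trigger words, followed by a number and the item description.
ORDER_PATTERN = re.compile(
    r"\b(?:order|buy|get me)\s+(\d+)\s+(.+)", re.IGNORECASE
)


def parse_order(message: str) -> Optional[Order]:
    """Extract an order from a chat message, or return None if there is none."""
    match = ORDER_PATTERN.search(message)
    if match is None:
        return None
    item = match.group(2).strip(" .!?").lower()
    return Order(quantity=int(match.group(1)), item=item)
```

A message such as "Please order 2 large pizzas." yields an `Order` with a quantity and an item, so the conversation itself replaces the form. Messages that don't look like an order return `None`, and the bot can ask a follow-up question instead.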

Siri, Apple’s personal assistant, can do some of these things already, and other major global players like Google and Facebook are scrambling to capitalize on the rise of chatbots. The messaging climate isn’t as advanced in the United States as it is in China, where people can do all kinds of useful things within WeChat. Nonetheless, some companies are positioning themselves to capitalize in the U.S. Kik has 240 million users, and its founder believes that Kik’s use of chatbots can help the company unseat Facebook as the dominant mobile platform.

Chatbots don’t need to seem “human,” they just have to be useful

So how do you judge the value of a chatbot? It really depends on what you want it to do. If you’re trying to “fool” someone into thinking your chatbot is actually a person, the Turing test is the ultimate measure of success. To pass the Turing test, a chatbot must be able to converse with a human judge and seem so convincingly like a person that the judge can’t tell it’s in fact a program. That’s a tall task indeed.

However, if you’re not concerned about having your chatbot “pass” as human, you can employ it to do all kinds of useful things. In fact, embracing the “bot-ness,” rather than trying to seem human, is actually more appealing for users.

“If I were a product manager, I would make sure to build a bot that was overtly bot-like. Name it Something-Bot,” bot expert Samuel Woolley told Mixpanel. “I’ve seen in my research that people love bots for their bot-ness. It’s when bots try to be overly human that people get frustrated with them.”

The utility of chatbots is as diverse as the entities that employ them. The U.S. Army uses SGT STAR as a “virtual guide” and recruiting tool that can answer lots of questions about the Army. Imperson is building chatbots patterned after personalities on TV shows and movies to allow fans to “talk” to their favorite characters like Iron Man and Miss Piggy (and enable the entertainment industry to market their products and drive consumers to buy tickets and tune in to their programming).

But chatbots’ usefulness isn’t limited to pure entertainment value or delivering products and information to consumers. Bots can also help companies interact directly with their customers, not to mention gather information and solicit feedback.

It might seem counterintuitive, but people — especially young people — are apparently more willing to be open and honest when talking to a bot. Fred Conrad, a cognitive psychologist and director of the Program in Survey Methodology at the University of Michigan Institute for Social Research, told The New Yorker that people are “more likely to disclose sensitive information via text messages than in voice interviews.”

Even ignoring the fact that it’s much easier to send a text message than it is to get someone on the phone (do you answer robocalls or listen to “Congratulations! You have been selected to…” voicemails?), it turns out that people don’t worry about being judged by a robot and consequently are less guarded in conversation.